A Transparent Self-Sensing Deformable Surface

We present FlexSense, a new thin-film, transparent sensing surface based on printed piezoelectric sensors, which can reconstruct complex deformations without the need for any external sensing, such as cameras. FlexSense provides a fully self-contained setup, which improves mobility and is not affected by occlusions. We devise two new algorithms that fully reconstruct the complex deformations of the sheet using only measurements from a sparse set of sensors printed on the periphery of the surface substrate. An evaluation shows that both proposed algorithms are capable of reconstructing complex deformations accurately. We demonstrate how FlexSense can be used for a variety of 2.5D interactions, including as a transparent cover for tablets where bending can be performed alongside touch to enable magic lens style effects, layered input, and mode switching, as well as a high degree-of-freedom input controller for gaming and beyond.

Introduction

There has been considerable interest in the area of flexible or deformable input/output (IO) digital surfaces, especially with recent advances in nanotechnology, such as flexible transistors, eInk and OLED displays, as well as printed sensors. The promise of such devices is to make digital interaction as simple as interacting with a sheet of paper. By bending, rolling or flexing areas of the device, a variety of interactions can be enabled in a very physical and tangible manner.

Whilst the vision of flexible IO devices has existed for some time, there have been few self-contained devices that enable rich continuous user input. Researchers have either created devices with limited discrete bending gestures, or prototyped interaction techniques using external sensors, typically camera-based vision systems. Whilst demonstrating compelling results and applications for bending-based interactions, these systems suffer from practical and interactive limitations. For example, the bend sensors used in such devices are limited to simple bending of device edges, rather than the complex deformations one expects when interacting naturally with a sheet of paper. In contrast, vision-based systems are not self-contained, are more costly and bulky, and can suffer from occlusions, particularly when the hand is interacting with the surface.

In this paper, we present FlexSense, a thin, transparent input surface that is capable of precisely reconstructing complex and continuous deformations, without any external sensing infrastructure. We build on prior work with printed piezoelectric sensors (previously used for touch, gesture and pressure sensing). Our new design uses only a sparse set of piezoelectric sensors printed on the periphery of the surface substrate. A novel set of algorithms fully reconstructs the surface geometry and the detailed deformations being performed, purely by interpreting these sparse sensor measurements. This allows an entirely self-contained setup, free of external vision-based sensors and their inherent limitations. Such a device can be used for a variety of applications, including as a transparent cover for tablets supporting complex 2.5D deformations for enhanced visualization, mode switching and input, alongside touch; or as a high degree-of-freedom (DoF) input controller.

In summary, our contributions are as follows:

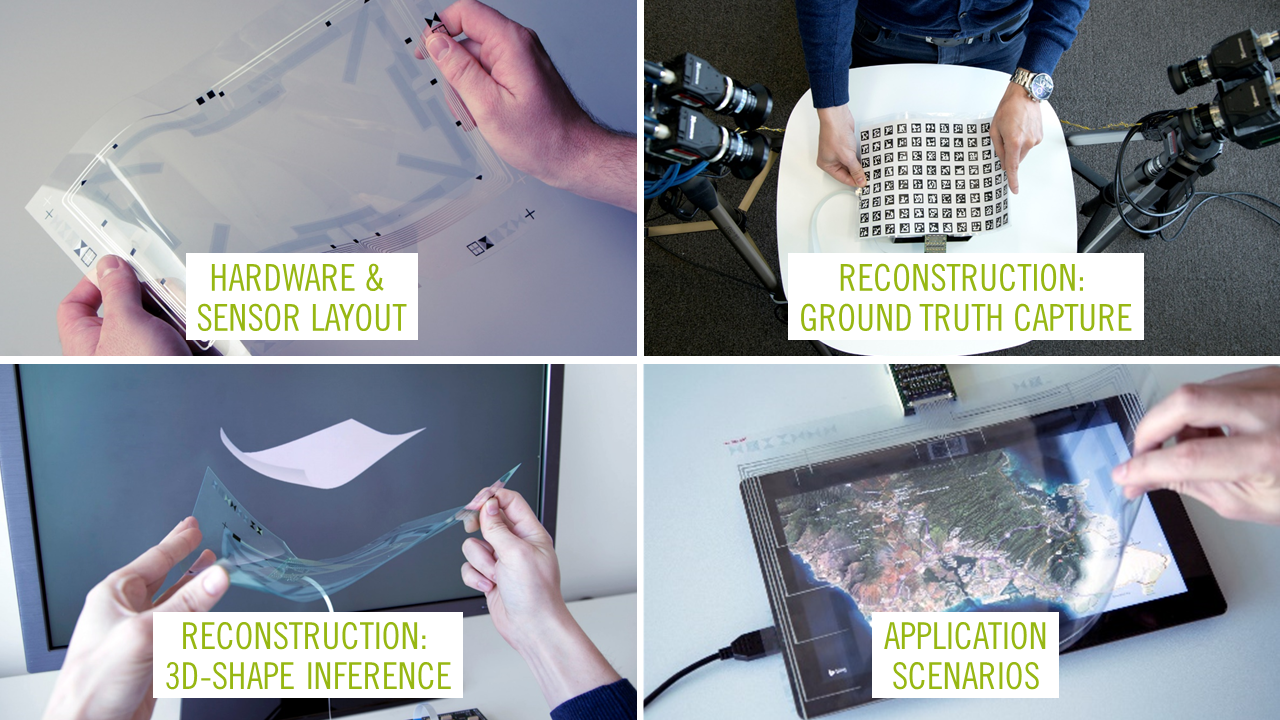

- We present a new sensor layout based on the prior PyzoFlex system, specifically for sensing precise and continuous surface deformations. This previous work demonstrated the use of printed piezoelectric sensors for touch and pressure sensing. In contrast, we present the idea of using these sensors to enable rich, bidirectional bending interactions. This includes the design of a new layout, associated sensor and driver electronics specifically for this purpose.

- Our main contribution is a pair of algorithms that take measurements from the sparse piezoelectric sensors and accurately reconstruct the 3D shape of the surface. This reconstructed shape can be used to detect a wide range of flex gestures. The complexity of the deformations afforded by our reconstruction algorithms has yet to be seen with 'self-sensing' (i.e. self-contained) devices, and would typically require external cameras and infrastructure.

- We train and evaluate the different algorithms using a ground-truth multi-camera rig, and discuss the trade-offs between implementation complexity and reconstruction accuracy. We also compare to a single camera baseline.

- Finally, we demonstrate new interaction techniques and applications afforded by such a novel sensor design and reconstruction algorithms, in particular as a cover for tablets where IO is coupled, and as a high DoF input controller.